Here's a study supported by the objective reality many of us already experience on YouTube.

The streaming video company's recommendation algorithm can sometimes send you on an hours-long video binge so captivating that you never notice the time passing. But according to a study from software nonprofit Mozilla Foundation, trusting the algorithm means you're actually more likely to see videos featuring sexualized content and false claims than videos that match your personal interests.

In a study with more than 37,000 volunteers, Mozilla found that 71 percent of the videos participants flagged as objectionable had been recommended by YouTube's algorithm. The volunteers used a browser extension to track their YouTube usage over 10 months, and when they flagged a video as problematic, the extension recorded whether they came across the video via YouTube's recommendation or on their own.

The study called these problematic videos "YouTube Regrets," meaning any regrettable experience a user had on YouTube. Such Regrets included videos "championing pseudo-science, promoting 9/11 conspiracies, showcasing mistreated animals, [and] encouraging white supremacy." One girl's parents told Mozilla that their 10-year-old daughter fell down a rabbit hole of extreme dieting videos while seeking out dance content, leading her to restrict her own eating habits.

What causes these videos to be recommended is their ability to go viral. If videos with potentially harmful content manage to accrue thousands or millions of views, the recommendation algorithm may circulate them to users, rather than focusing on their personal interests.

YouTube removed 200 videos flagged through the study, and a spokesperson told the Wall Street Journal that "the company has reduced recommendations of content it defines as harmful to below 1% of videos viewed." The spokesperson also said that YouTube has launched 30 changes over the past year to address the issue, and the automated system now detects and removes 94 percent of videos that violate YouTube's policies before they reach 10 views.

While it's easy to agree on removing videos featuring violence or racism, YouTube faces the same misinformation policing struggles as many other social media sites. It previously removed QAnon conspiracies that it deemed capable of causing real-world harm, but plenty of similar-minded videos slip through the cracks by arguing free speech or claiming entertainment purposes only.

YouTube also declines to make public any information about how exactly the recommendation algorithm works, claiming it as proprietary. Because of this, it's impossible for us as consumers to know if the company is really doing all it can to combat such videos circulating via the algorithm.

While 30 changes over the past year is an admirable start, if YouTube really wants to eliminate harmful videos on its platform, letting its users plainly see its efforts would be a good first step toward meaningful action.

Topics YouTube

(Editor: {typename type="name"/})

LA Galaxy vs. Tigres 2025 livestream: Watch Concacaf Champions Cup for free

LA Galaxy vs. Tigres 2025 livestream: Watch Concacaf Champions Cup for free

Snakes have been hitchhiking on planes. Have a nice flight.

Snakes have been hitchhiking on planes. Have a nice flight.

The 'Civil War' AI controversy, explained

The 'Civil War' AI controversy, explained

Kunlun Tech launches the first LLM

Kunlun Tech launches the first LLM

Grim video of a starving polar bear could show the species' future

Grim video of a starving polar bear could show the species' future

'Severance' puts a spin on the Orpheus and Eurydice myth in its Season 2 finale

Throughout Severance Season 2, fans and critics alike have drawn connections between Mark (Adam Scot

...[Details]

Throughout Severance Season 2, fans and critics alike have drawn connections between Mark (Adam Scot

...[Details]

People are worried about this lost beluga whale that was spotted in London's River Thames

Benny the beluga whale is a long way from home.After sightings of the animal swimming in London's Ri

...[Details]

Benny the beluga whale is a long way from home.After sightings of the animal swimming in London's Ri

...[Details]

Geely’s Zeekr plans US stock market debut, aims to raise $1 billion · TechNode

Zeekr, a reputed electric vehicle brand under Chinese automaker Geely, plans to engage in discussion

...[Details]

Zeekr, a reputed electric vehicle brand under Chinese automaker Geely, plans to engage in discussion

...[Details]

Realme unveils GT5, an affordable smartphone with 24GB RAM · TechNode

On Monday, Chinese smartphone maker Realme unveiled its latest offering, the GT5, which boasts a bud

...[Details]

On Monday, Chinese smartphone maker Realme unveiled its latest offering, the GT5, which boasts a bud

...[Details]

Best earbuds deal: Save 20% on Soundcore Sport X20 by Anker

SAVE OVER $10:As of April 25, the Soundcore Sport X20 by Anker earbuds are on sale for $63.99 at Ama

...[Details]

SAVE OVER $10:As of April 25, the Soundcore Sport X20 by Anker earbuds are on sale for $63.99 at Ama

...[Details]

Stellantis mulls partnership with Chinese EV maker Leapmotor: report · TechNode

Stellantis is exploring cooperation possibilities, which could include an investment deal or strateg

...[Details]

Stellantis is exploring cooperation possibilities, which could include an investment deal or strateg

...[Details]

Taylor Swift's 'The Tortured Poets Department' is here and everyone is overwhelmed

Just as Swifties wrapped up their second listen of Taylor Swift's The Tortured Poets Departmentin th

...[Details]

Just as Swifties wrapped up their second listen of Taylor Swift's The Tortured Poets Departmentin th

...[Details]

Dating culture has become selfish. How do we fix it?

If you’re single and extremely online, you’ll have noticed a particular disdain for dati

...[Details]

If you’re single and extremely online, you’ll have noticed a particular disdain for dati

...[Details]

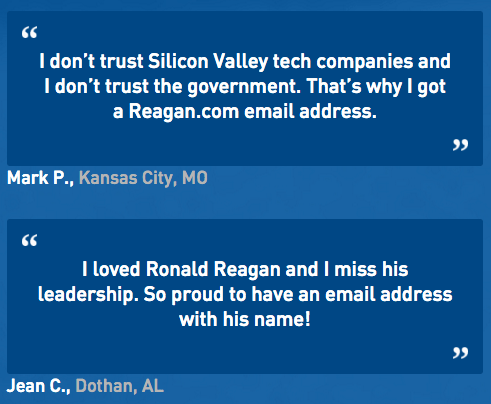

A worthless juicer and a Gipper-branded server

The Baffler ,April 21, 2017 Daily Baffleme

...[Details]

The Baffler ,April 21, 2017 Daily Baffleme

...[Details]

Best headphones deal: Take $90 off Skullcandy Crusher ANC 2 headphones at Amazon

GET $90 OFF: As of April 19, the Skullcandy Crusher ANC 2 over-ear noise-canceling headphones are av

...[Details]

GET $90 OFF: As of April 19, the Skullcandy Crusher ANC 2 over-ear noise-canceling headphones are av

...[Details]

The Best Gaming Concept Art of 2016

I'm one of the first to try Apple AirPlay in a U.S. hotel — 5 ways it makes travel better

接受PR>=1、BR>=1,流量相当,内容相关类链接。