DeepSeek has released a new paper, with co-founder Liang Wenfeng credited as a contributor, detailing how its latest large language model, DeepSeek-V3, achieves efficient training and inference using only 2,048 H800 GPUs, significantly fewer than the tens of thousands typically required. The team attributes this efficiency to four key innovations: memory optimization through multi-head latent attention (MLA), computational savings via a Mixture-of-Experts (MoE) design with FP8 precision, communication improvements using a multi-plane network topology, and faster inference through multi-token prediction (MTP). With MLA, KV cache memory usage is cut to just 70KB per token, as little as one-seventh that of competing models. The MoE architecture activates only 37 billion of the model’s 671 billion parameters per forward pass, reducing training costs by 90% compared to dense models. FP8 training further halves compute and memory usage, with a minimal accuracy tradeoff. Beyond the model, the paper also outlines five future directions for AI hardware design, advocating tighter integration between software and hardware to address memory, compute, and networking bottlenecks. [36Kr, in Chinese]
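
To put the headline figures in perspective, here is a minimal back-of-the-envelope sketch in Python. It uses only the numbers reported above; the 32,000-token context length and the reading of 1KB as 1,000 bytes are illustrative assumptions, not figures from the paper.

```python
# Rough arithmetic on the figures quoted in the brief.
# Assumptions (not from the paper): 1 KB = 1,000 bytes, and a
# hypothetical 32,000-token context used purely for illustration.

TOTAL_PARAMS = 671e9               # total parameters reported for DeepSeek-V3
ACTIVE_PARAMS = 37e9               # parameters activated per forward pass
KV_BYTES_PER_TOKEN = 70 * 1000     # 70KB per token with MLA (1 KB = 1,000 bytes assumed)


def active_fraction(active: float, total: float) -> float:
    """Share of the model's parameters that are active at once."""
    return active / total


def kv_cache_bytes(num_tokens: int, bytes_per_token: int = KV_BYTES_PER_TOKEN) -> int:
    """Total KV cache size for a sequence of num_tokens tokens."""
    return num_tokens * bytes_per_token


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
    print(f"Active parameter share: {frac:.1%}")

    context_tokens = 32_000  # hypothetical context length
    size_gb = kv_cache_bytes(context_tokens) / 1e9
    print(f"KV cache at {context_tokens:,} tokens: {size_gb:.2f} GB")
```

Under these assumptions, roughly 5.5% of the parameters are active at a time, and a 32,000-token sequence would need about 2.24 GB of KV cache.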


The cicadas aren't invading the U.S.

The cicadas aren't invading the U.S.

Photography Incubabula: How Early Photographs Got in Books

Photography Incubabula: How Early Photographs Got in Books

Comfort TV: Notes on “The Great British Baking Show”

Comfort TV: Notes on “The Great British Baking Show”

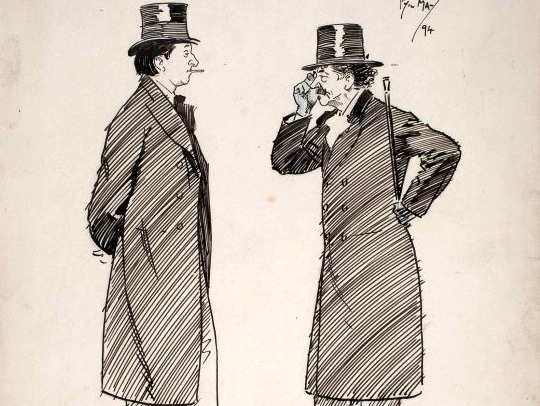

Too Clever: Oscar Wilde the Plagiarist

Too Clever: Oscar Wilde the Plagiarist

Best keyboard deals: Save on Asus gaming keyboards at Amazon

Best keyboard deals: Save on Asus gaming keyboards at Amazon

The Best Gaming Concept Art of 2016

Poem: Mark DeFoe, “Jan. 27, 1979”

Jan. 27, 1979By Mark DeFoeJanuary 27, 2016From the ArchiveDavid Hall, Broadcast Television Intervent

...[Details]

Jan. 27, 1979By Mark DeFoeJanuary 27, 2016From the ArchiveDavid Hall, Broadcast Television Intervent

...[Details]

Go Out in a Blaze of GloryBy W. D. SnodgrassJanuary 5, 2016From the ArchiveRobert Frost on a 1974 po

...[Details]

Go Out in a Blaze of GloryBy W. D. SnodgrassJanuary 5, 2016From the ArchiveRobert Frost on a 1974 po

...[Details]

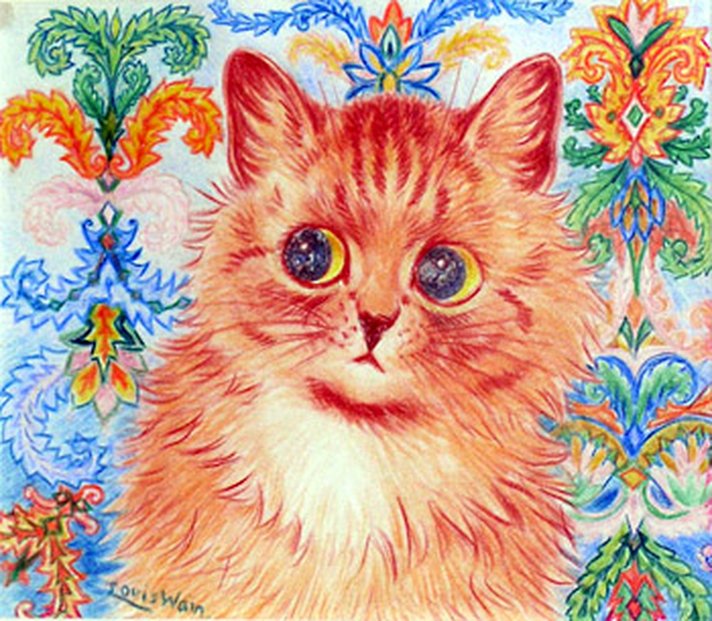

The Answers to Our Hink Pink Contest

Sixty Hink Pinks: The AnswersBy Dylan HicksJanuary 28, 2016Really Difficult Puzzles“Fat Cat” is the

...[Details]

Sixty Hink Pinks: The AnswersBy Dylan HicksJanuary 28, 2016Really Difficult Puzzles“Fat Cat” is the

...[Details]

Best robot vacuum deal: Save $200 on Eufy X10 Pro Omni robot vacuum

Save $200: As of May 16, the Eufy X10 Pro Omni robot vacuum is on sale for $699.99 at Amazon. That's

...[Details]

Save $200: As of May 16, the Eufy X10 Pro Omni robot vacuum is on sale for $699.99 at Amazon. That's

...[Details]

One Percent: Geoff Dyer on Photos of Income Inequality

One PercentBy Geoff DyerFebruary 2, 2016LookPhotographing inequality.A chef from a nearby luxury lod

...[Details]

One PercentBy Geoff DyerFebruary 2, 2016LookPhotographing inequality.A chef from a nearby luxury lod

...[Details]

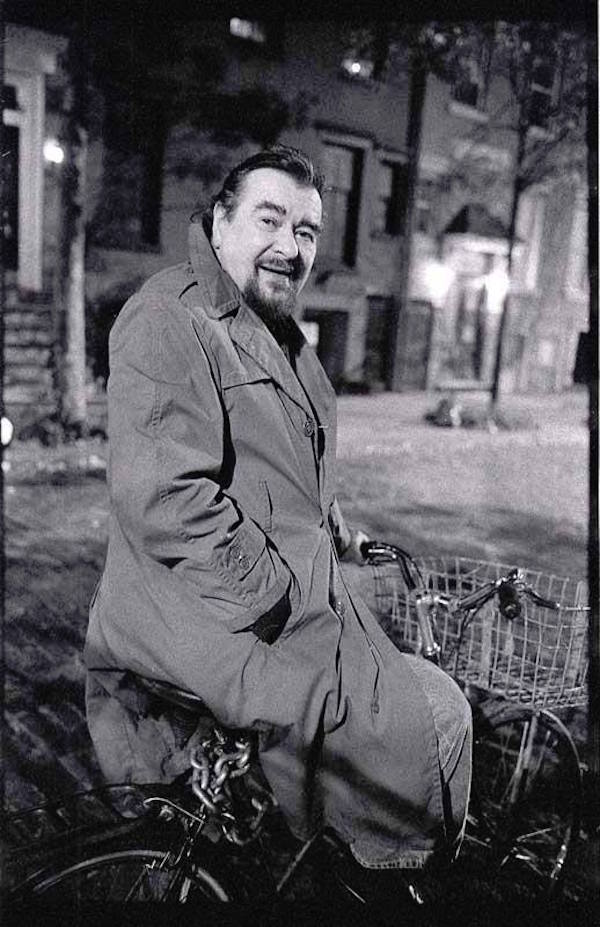

“More Rock and Roll! More Loud!” Giorgio Gomelsky, 1934–2016

The Last of the MohicansBy Brian CullmanJanuary 14, 2016First PersonRemembering Giorgio Gomelsky, 19

...[Details]

The Last of the MohicansBy Brian CullmanJanuary 14, 2016First PersonRemembering Giorgio Gomelsky, 19

...[Details]

Staff Picks: Raymond Pettibon, Jane Campion, Maggie Doherty

Staff Picks: Dissent, Deprogrammers, DogsBy The Paris ReviewJanuary 22, 2016This Week’s ReadingRaymo

...[Details]

Staff Picks: Dissent, Deprogrammers, DogsBy The Paris ReviewJanuary 22, 2016This Week’s ReadingRaymo

...[Details]

Bargaining For the Common Good

Interviews for Resistance

...[Details]

Interviews for Resistance

...[Details]

The Mr. Mantarian Subterfuge: A Story of Dog Boarding

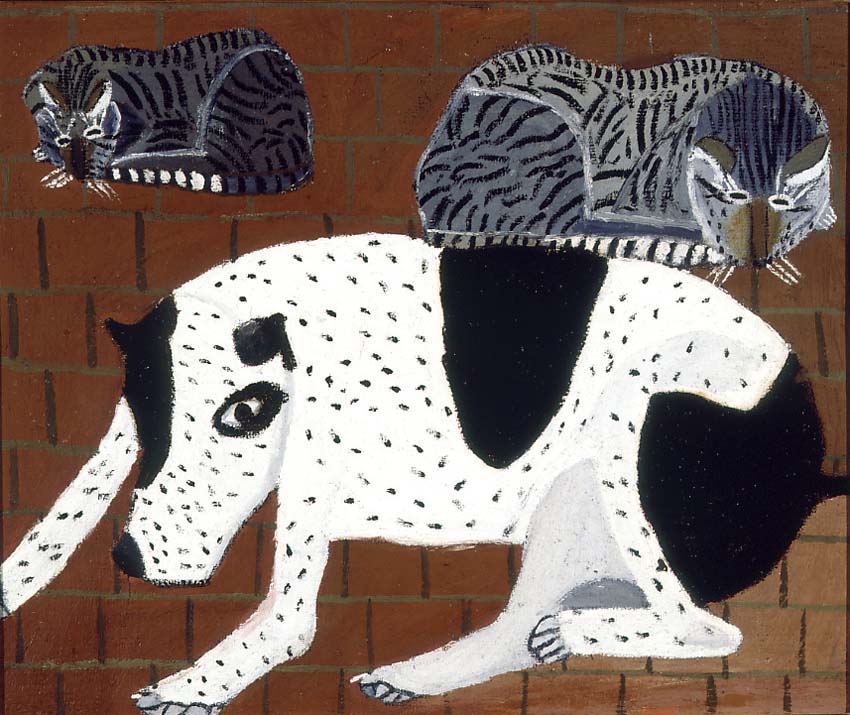

AliasBy Sadie SteinJanuary 12, 2016Our Daily CorrespondentAmador Lugo, Perro con Gatos, 1933.Back wh

...[Details]

AliasBy Sadie SteinJanuary 12, 2016Our Daily CorrespondentAmador Lugo, Perro con Gatos, 1933.Back wh

...[Details]

接受PR>=1、BR>=1,流量相当,内容相关类链接。